How the Nozzle Becomes a Gripper

A standard 3D printer has a hotend nozzle and a heated bed, neither of which is useful for picking up components. The key modification is a custom toolhead mounted alongside the original hotend on a stepper-driven rail:

- The original hotend nozzle and heated bed are not used for printing. The printer is repurposed purely as a precision XYZ gantry.

- A vacuum nozzle (thin tube connected to a vacuum pump) is mounted on the toolhead. When the pump turns on, suction grips the component from above.

- A stepper motor driven by a TMC5160 stepper driver moves the toolhead along the rail between three tool positions: LEFT (camera clear), MIDDLE (BLTouch probe), and RIGHT (vacuum nozzle aligned for pickup).

- The BLTouch probe measures the exact Z height of each component, so the vacuum nozzle knows precisely how far to descend. Without this measurement, the nozzle would either stop short of the component or crash into the board.
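To make the tool switching and Z probing concrete, here is a minimal Python sketch of how one pickup might be planned once the BLTouch has reported a trigger height. Everything in it is a hypothetical illustration, not the project's actual code: the `ToolPosition`, `ToolheadCalibration`, and `plan_pickup` names, the calibration fields (`z_offset`, `nozzle_dx`, `nozzle_dy`, `overtravel`), the 10 mm safe travel height, and the `(action, argument)` step format are all assumptions. It only computes the move sequence; it does not talk to the printer, the TMC5160, or the pump.

```python
from dataclasses import dataclass
from enum import Enum


class ToolPosition(Enum):
    """The three rail positions described above."""
    LEFT = "camera clear"
    MIDDLE = "BLTouch probe"
    RIGHT = "vacuum nozzle"


@dataclass
class ToolheadCalibration:
    # Hypothetical calibration values, all in mm.
    # z_offset: gantry Z at which the nozzle tip touches a surface, minus the
    # gantry Z at which the BLTouch triggers on that same surface.
    z_offset: float
    # nozzle_dx/dy: XY shift of the nozzle (RIGHT) relative to the probe (MIDDLE).
    nozzle_dx: float = 0.0
    nozzle_dy: float = 0.0
    # overtravel: small extra descent past contact so the nozzle seats on the part.
    overtravel: float = 0.2


def plan_pickup(x: float, y: float, probed_z: float,
                cal: ToolheadCalibration, safe_z: float = 10.0) -> list[tuple]:
    """Return ordered (action, argument) steps for one hypothetical pickup.

    x, y: probe XY over the component (MIDDLE position).
    probed_z: gantry Z at which the BLTouch triggered on top of the component.
    """
    # Convert the probe reading into the gantry Z that puts the nozzle tip on the part.
    pick_z = probed_z + cal.z_offset - cal.overtravel
    if pick_z >= safe_z:
        raise ValueError("pick height must be below the safe travel height")
    return [
        ("move_z", safe_z),                    # lift clear before the rail moves
        ("select_tool", ToolPosition.RIGHT),   # bring the vacuum nozzle into line
        ("move_xy", (x + cal.nozzle_dx, y + cal.nozzle_dy)),
        ("vacuum", True),                      # start suction before contact
        ("move_z", pick_z),                    # descend to the probed part height
        ("move_z", safe_z),                    # lift the part away
    ]


if __name__ == "__main__":
    cal = ToolheadCalibration(z_offset=-1.5)
    for step in plan_pickup(100.0, 50.0, probed_z=3.0, cal=cal):
        print(step)
```

Switching the pump on before the descent is a choice made for this sketch; a real build might instead enable suction only at contact.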

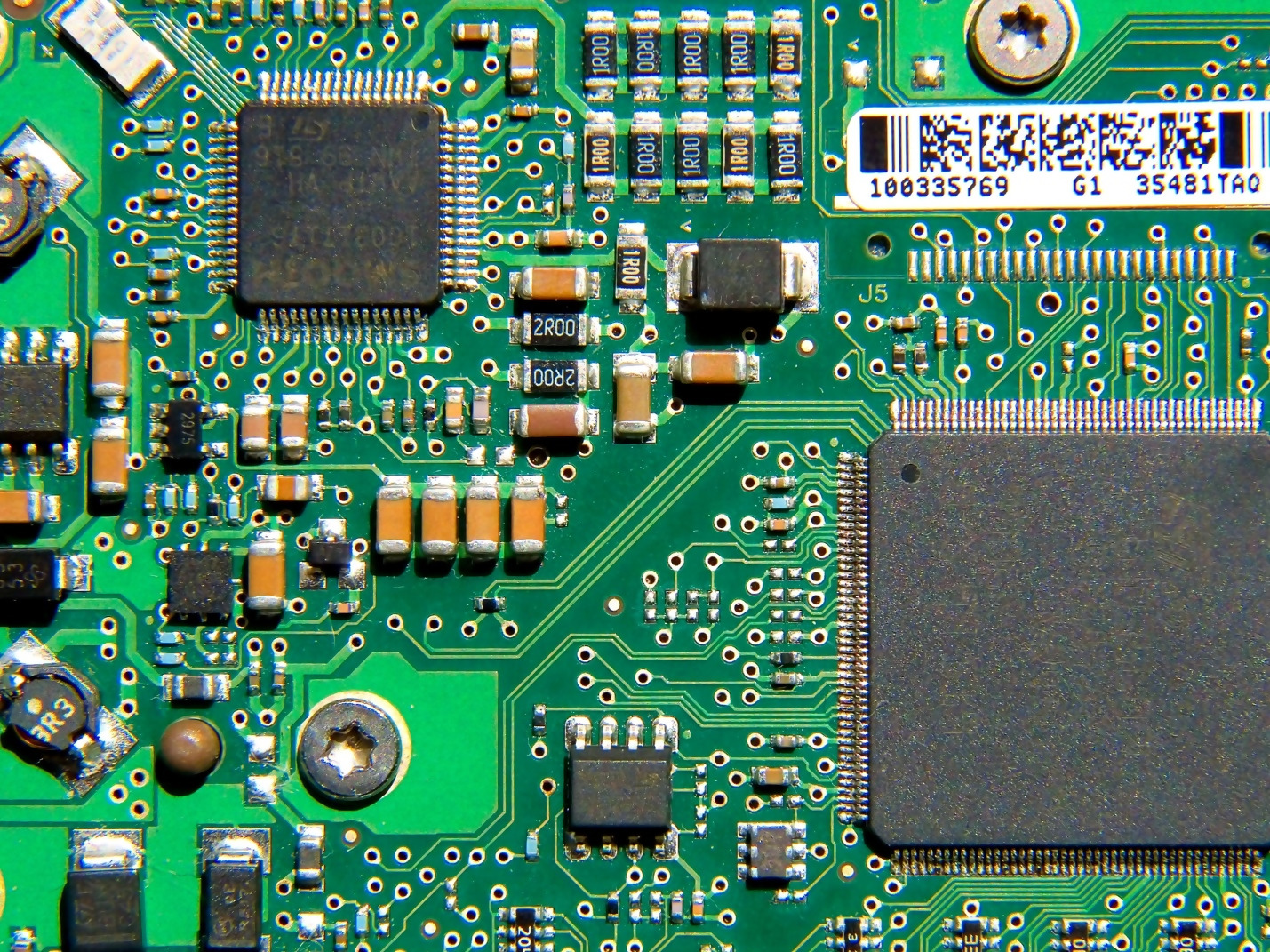

The heated bed stays off — the PCB sits flat on the unheated bed surface, held in place by its own weight. The bed provides a flat, known reference plane for the coordinate system.
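As an illustration of why a known, flat reference plane helps, the following sketch maps a point given in PCB coordinates to machine X/Y, assuming the board's origin corner and its rotation on the bed have already been measured. `BoardPlacement`, `board_to_machine`, and the measurement fields are hypothetical; the original text does not describe how the board's position on the bed is determined. Because the bed is flat, the mapping in this sketch stays a 2D rotation plus translation, while the BLTouch supplies the per-component Z.

```python
import math
from dataclasses import dataclass


@dataclass
class BoardPlacement:
    # Hypothetical measurements: machine X/Y (mm) of the PCB's origin corner
    # on the bed, and the board's rotation relative to the machine X axis.
    origin_x: float
    origin_y: float
    rotation_deg: float = 0.0


def board_to_machine(px: float, py: float, board: BoardPlacement) -> tuple[float, float]:
    """Rotate a PCB-frame point about the board origin, then translate it onto the bed."""
    theta = math.radians(board.rotation_deg)
    mx = board.origin_x + px * math.cos(theta) - py * math.sin(theta)
    my = board.origin_y + px * math.sin(theta) + py * math.cos(theta)
    return mx, my


if __name__ == "__main__":
    board = BoardPlacement(origin_x=20.0, origin_y=30.0, rotation_deg=1.5)
    print(board_to_machine(12.7, 8.9, board))
```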